From bioprinting to laboratory automation, read about the technologies that are revolutionising science.

A “disruptive technology” is an innovation that displaces established products or practices and, in doing so, brings about significant, usually positive, change.

Disruptive technologies are having a profound impact on many environments; from our homes to our cities, technology is changing the way we operate, the way we communicate and the way we work.

The laboratory is no exception: it has changed substantially as disruptive technologies have been gradually adopted over the past decade.

AI, automation and other emerging technologies are connecting the laboratory. The result is a more efficient and productive environment that not only eases the burden on scientists but also increases the rate of discovery.

Other notable technologies are changing the way we treat patients and tackle problems that were previously considered beyond our control.

New technologies such as bioprinting and gene editing are proving that human capability extends as far as we allow it.

Here are four disruptive technologies that are revolutionising laboratory practice and changing the way modern science is conducted.

1) Automation

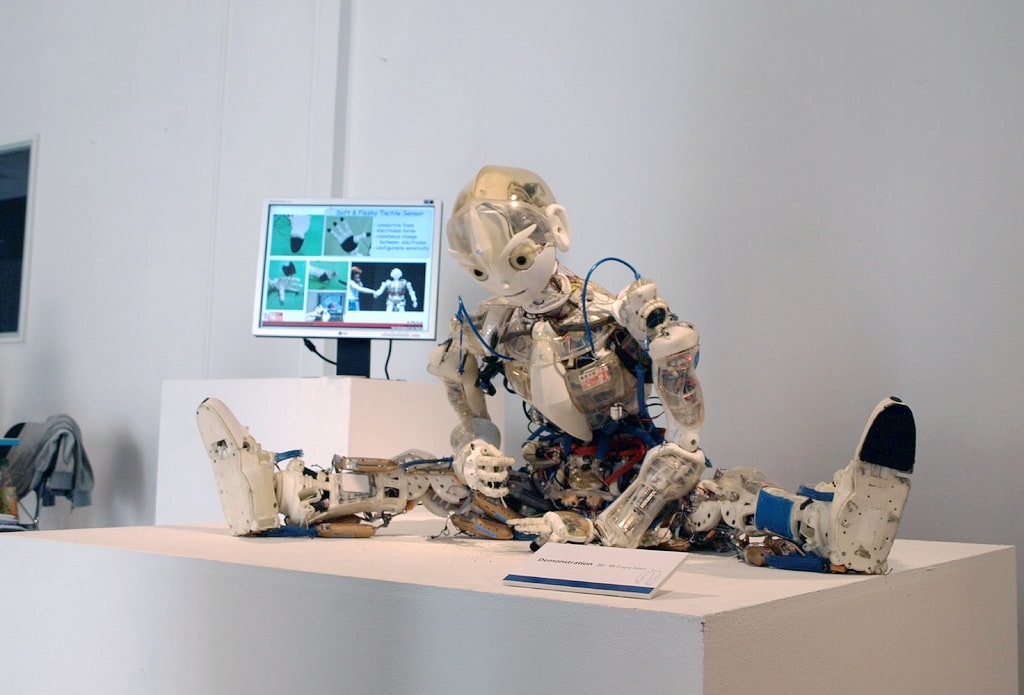

(Image Source: Wikipedia)

Automation is the most obvious area of change in the laboratory.

The most commonly used forms of automation to date are the Laboratory Information Management System (LIMS) and the Laboratory Execution System (LES). Both are software-based solutions that continue to evolve as the environment and the demands of researchers change.

The LES, for example, allows researchers to bring the Internet of Things (IoT) into the lab without enormous cost.

By integrating an LES, scientists can connect existing laboratory instruments and machinery to it and collect data directly from each instrument in digital form.

Capturing data straight from the instruments removes the need to transcribe readings by hand, saving researchers time and effort.

Automation naturally raises questions about how data should be stored in the digital age. As a result, scientists are increasingly turning to electronic lab notebooks to store their data on a centralised platform.

The other increasingly common form of laboratory automation is collaborative robots (co-bots).

Co-bots are small, lightweight machines designed to work safely alongside people and to excel at repetitive tasks.

Their size and manoeuvrability allow them to be deployed easily in tight spaces, and their ability to sense movement accurately and adjust their speed accordingly lets them operate safely near people. This makes them a good fit for scientists who want to make the most of limited laboratory space.

Co-bots can perform a high volume of repetitive tasks quickly and more consistently than humans, reducing the risk of human error.

As a result, co-bots can take over routine tasks that previously fell to scientists, freeing researchers to focus their time and expertise where they matter most.

These are not the only forms of laboratory automation; many expect that most laboratory equipment will eventually become autonomous, streamlining experimental processes.

2) AI and Machine Learning

Automation cannot reach its full potential without some form of AI or machine learning, a key component of a smarter laboratory ecosystem.

AI in laboratories generally consists of statistical algorithms that learn from experience: the more experimental data they are given, the more accurate their predictions tend to become.

This gives scientists an early indication of an experiment's likely outcome, allowing them to make the best use of both materials (and therefore budget) and time.

AI provides a systematic, less biased analysis that can inform the planning of future experiments. In this way, AI can support researchers from the initial phases of an experiment through to its conclusion, streamlining the experimental process and, as a result, driving innovation forward.

Machine learning is improving the accuracy of modern medicine.

So-called “precision medicine” combines recorded patient data with the predictive capabilities of machine learning to determine which treatments are best suited to individual patients.

This technology can capture individual variability in genes, function and environment, helping doctors make critical treatment decisions and tailor treatment to the needs of the individual rather than just the disease.

Some have questioned whether the growing adoption of automation and AI will displace jobs. In research and development, however, these technologies are expected to ease the burden on scientists and augment human ability, resulting in a more productive and efficient laboratory environment.

3) Bioprinting

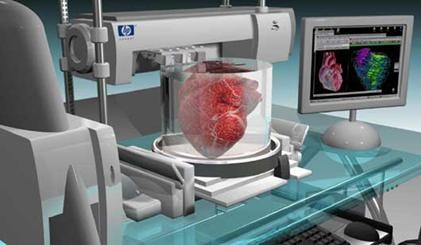

Bioprinting, one of the most “promising areas of regenerative medicine”, has the potential to revolutionise the way we treat patients in the coming decades.

Much like 3D printing with metals and plastics, bioprinting uses biological material to build up tissue, with the aim of creating functioning replicas of organs and other parts of the human body.

Layer by layer, the biological material is built up to form the desired organ, which could then be transplanted into a patient.

The organ can also be built from the patient’s own cells, which reduces the risk of rejection because the cells are not foreign to the patient's immune system.

In future, this technology could allow us not only to replace failing organs but also to make them more resistant to infection, and potentially to embed digital technologies into tissue to enhance our abilities.

Bioprinting has developed substantially over the past decade, but there is still a long way to go.

It is not yet possible to print full-size complex organs such as the heart or liver, which are far more difficult to create because of their intricate structure and the dense network of blood vessels they require.

In April 2019, scientists at Tel Aviv University made a breakthrough in printing more complex organs when they constructed a miniature heart complete with cells and blood vessels.

The opportunities offered by this technology are vast, and it is likely to significantly alter the way we treat patients in the coming decades.

4) Genome editing

Genome editing refers to technologies that allow scientists to modify an organism’s DNA.

With this technology, genetic material can be added, removed or altered.

CRISPR is one example of a gene-editing technology that has become popular in recent years because of its speed and efficiency.

CRISPR harnesses a natural defence mechanism that bacteria use against invading viruses, repurposing it so that scientists can cut and edit DNA at specific locations.

Scientists using this technology can control which genes are expressed in edited organisms.

With CRISPR, the genetic causes of hereditary diseases such as Huntington’s disease could potentially be removed faster and with greater precision and accuracy than with earlier techniques.

In this way, genome editing has the potential to cure many of the 6,000 known genetic diseases.

Genome editing technology offers enormous opportunities, yet the ability to edit the genetic code of animals, plants and even humans inevitably carries considerable risk.

Scientists are still working on determining whether this technology is safe and effective for use on humans.

Beyond safety, the ethics of altering humans must be considered; this technology could prove a slippery slope towards genetically modifying people to make them stronger, smarter and so on.

Ethical concerns came to the fore in 2018, when a Chinese scientist announced that he had created the first HIV-resistant humans by editing the genes of embryos using CRISPR; he was subsequently jailed for his actions.

The global scientific community condemned the work as “profoundly disturbing”, and it was found to have violated ethical regulations.

For all its benefits, without tight regulation this technology makes dystopian worlds like the one described in Huxley’s “Brave New World” not only possible but probable.

Despite these risks, gene editing remains a useful tool for tackling viruses and disease.

For example, a new approach to tackling the Zika virus, which is commonly spread by mosquitoes, emerged after two scientists from the University of California found that CRISPR-Cas9 could be used to alter features of the mosquito, reducing its potential to carry the virus.

Science Daily reported that the scientists were able to delay the mosquitoes’ development, shorten their lifespan, and alter egg development and fat accumulation.

This success demonstrates how scientists can harness the abilities of gene editing to combat some of the world’s deadliest diseases.

Conclusions

Institutions in both industry and academia have recognised the strategic importance of disruptive technologies in the laboratory.

As these technologies are implemented, it is paramount that scientific progress does not come at the expense of the qualities that make us human.

In many ways, these technologies provide a “shortcut to evolution”: an unnatural process that some perceive as akin to “playing God”.

Practising science with no regard for morality is a slippery slope that is likely to have serious repercussions.

However, many technologies initially perceived as dangerous have ultimately helped society to progress.

The internet, for example, is a disruptive technology that has accelerated the accumulation and spread of knowledge.

Morality should, of course, be a key consideration, but it should not halt progress.

The adoption of disruptive technologies will drive further waves of innovation and discovery in science, creating opportunities for future generations.

About the Author:

Phoebe Chubb is a third-year BSc student at the University of Exeter with a keen interest in documenting the impact technologies have on the workplace. She has published articles on Hackernoon, Pie News, IoT tech News and many more.